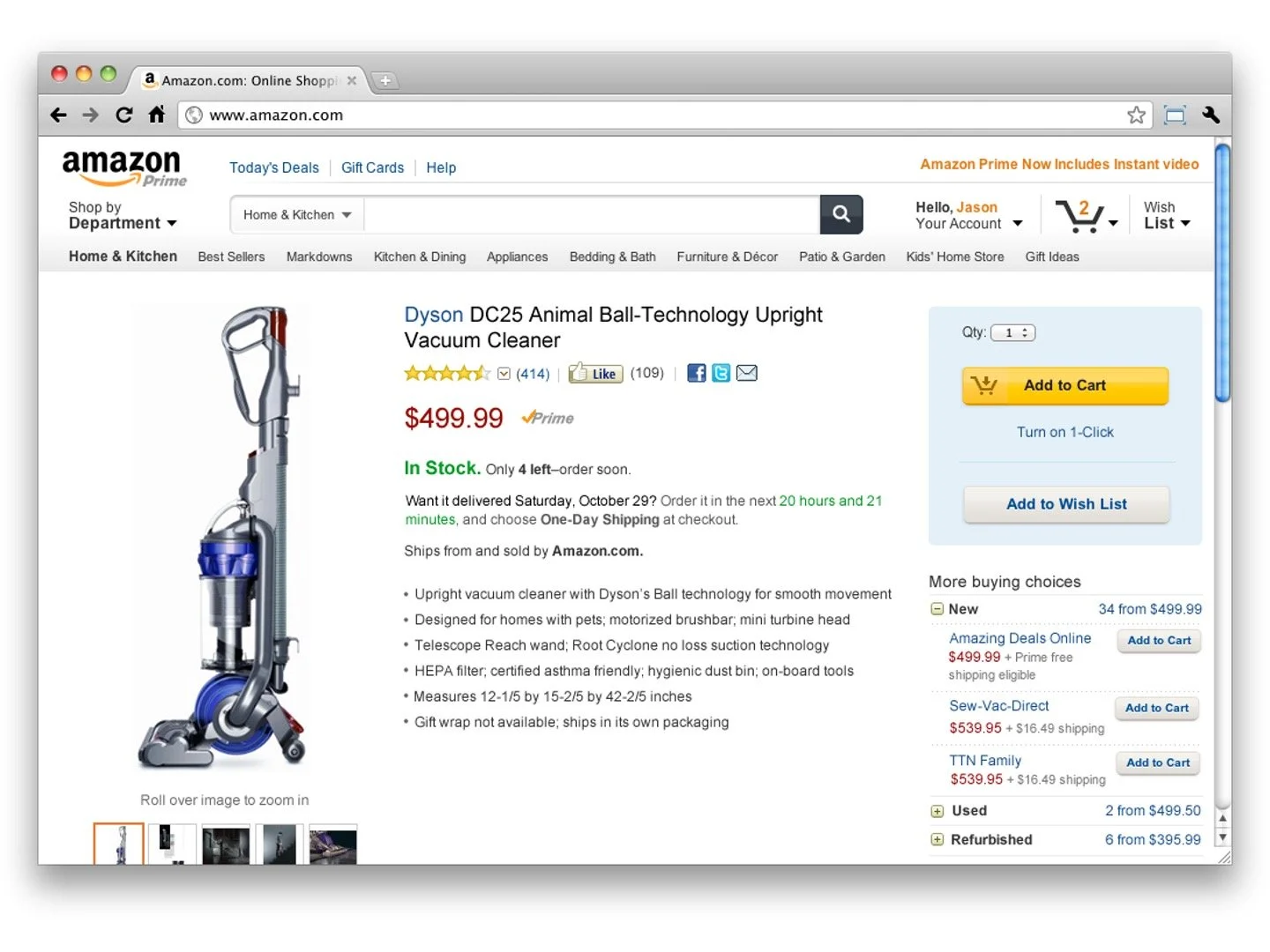

Universal Detail Page

The Universal Detail Page was the first project I drove as the lead for product evaluation at Amazon. The product detail page at Amazon is deceptively complex. Not only is it, by a long shot, one of the most viewed pages in the Amazon experience; it is also one of the most engaged-with properties on the web. To support so many user needs at that breadth, the page is assembled from ~450 individual features, owned by numerous teams and slotted into one of many flexible layouts. The combination of these elements varies based on a number of factors, such as location, department, device, and past activity. The Universal Detail Page (UDP) project aimed to tame the sprawl that occurs when a page is architected this way, and to serve as a proving ground for our newly developed design system, AUI.

What we were solving for

The UDP project came at the confluence of two major tipping points in the product evaluation space. First, the dynamic of small teams and individual ownership, coupled with the detail page’s slot-based construction, had allowed the detail page experience to get well out of hand. Over time, this led to individual page experiences that were demonstrably over-featured, inconsistent, and fairly convoluted. This wasn’t just conjecture. Multiple signals pointed to these problems and to the negative effect they were having on both customer experience and revenue: blog posts, usability data, internal feedback, qualitative testing, and direct letters to the CEO, to name a few.

The second factor was the formation of our design systems team, which had recently been stood up and was working tirelessly on the first incarnation of the Amazon Design System (AUI). Considering the scope, scale, and dynamic nature of the detail page, leadership saw it as a good opportunity not only to test and refine the new design system in a real-life scenario, but also to mitigate some of the sprawl-related issues along the way. The entire project took about one year, interspersed with other initiatives the team was running, both internally and in partnership with sister teams. Much of this work was done collaboratively, with our team acting variously as a primary customer of the Amazon Design System, as the central owner of Amazon’s product evaluation properties, or as a peer to the other core Amazon properties. That process involved hundreds of meetings and syncs, multiple trips to Europe, India, and elsewhere in Asia, and many long hours iterating with our partners in the lab. In the end, the initiative wasn’t just about hitting our measurable goals; it was also about working across the organization to reach consensus on the rules, approach, and principles governing the product evaluation space.

Goals

Improve consistency across the full Amazon detail page feature set. Consolidate UI patterns where possible.

Serve as a testing ground for the Amazon UI framework, and a space to iterate on it, before it rolls out to the rest of the organization.

Identify and make improvements to individual features and detail page templates, as determined through usability testing (UTesting) and A/B testing.

Ensure detail page feature parity between all of the device types Amazon supports.

Maintain or improve total ordered revenue while making these changes, as determined through A/B and multivariate testing.

“Get rid of the clown barf.”— Jeff Bezos

The work

Approach

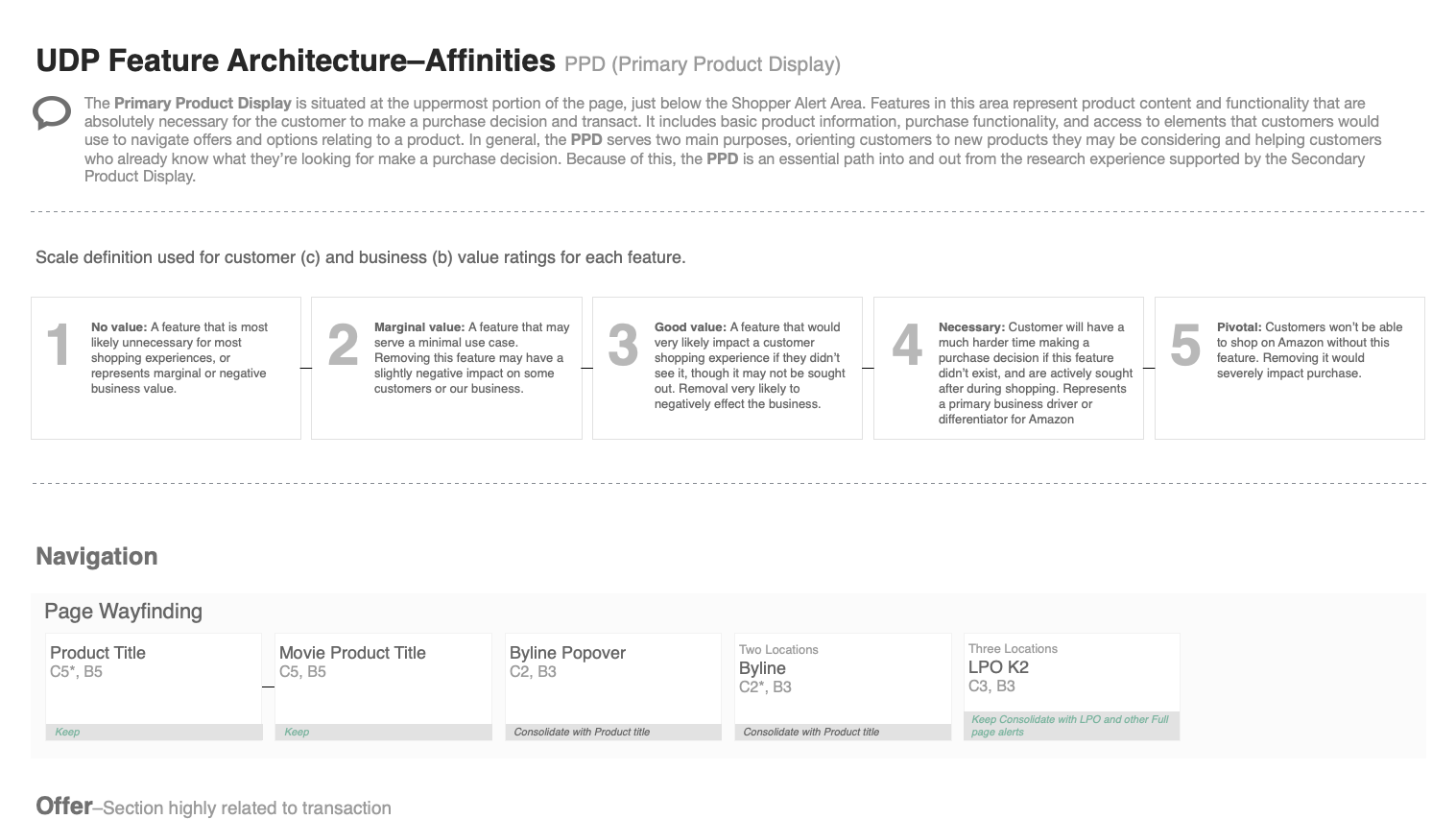

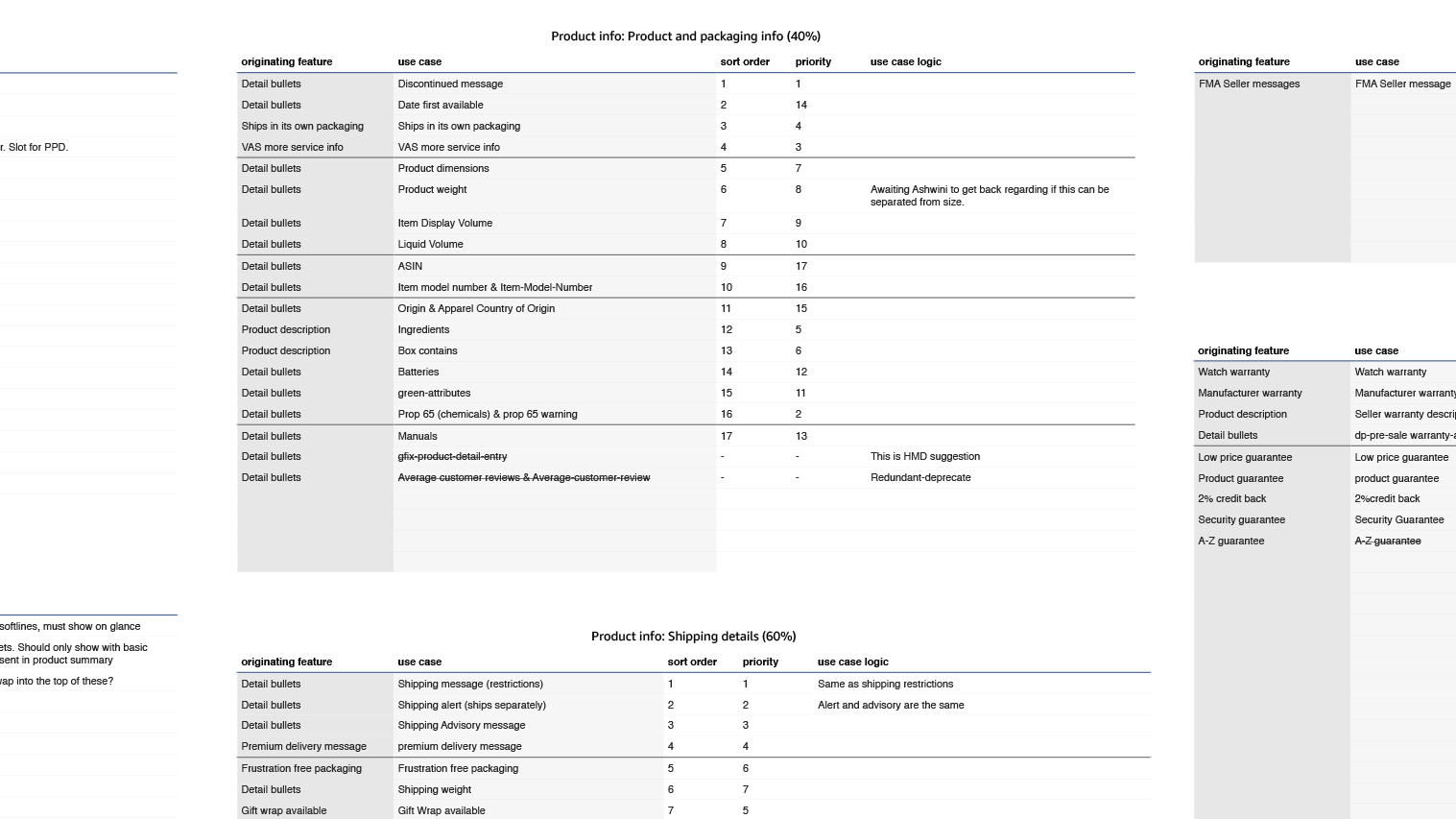

The brunt of the early work was understanding the lay of the land and, more importantly, who was responsible for each parcel of it. Of the ~450 features available to be displayed on a given detail page, only about 200 were centrally owned by my core team, so we had to identify the remaining ~250 features and the teams responsible for their upkeep and maintenance. Many of those were easy to track down because they represented department-specific functionality, such as Kindle, softlines, or automotive, but many others were small features owned by teams for niche purposes, such as points counters for the Japanese market or a pharmacy locator in the UK. New feature requests were also coming in from teams all the time, so the first step was to start collecting these elements into a central space where they could be tracked, managed, and iterated on across teams.

The bulk of this collection process happened across two workstreams. The first involved a lot of digging into the page architecture itself. Much of this fell to technical program management, who had to locate all of the detail page templates that existed across Amazon, debug them to uncover their features, and then find the owners of those features within the organization. This was painstaking work: detail page templates could easily be branched off the core template, and nothing stopped teams from doing so, even though it was frowned upon organizationally. There were also many templates and features with no explicit owner, whether as the result of failed experiments, organizational changes, or simple history. Very few people at Amazon had tenure dating back to the ’90s, so a lot of history had to be uncovered just to identify these items, understand them, and, if necessary, rehouse them.

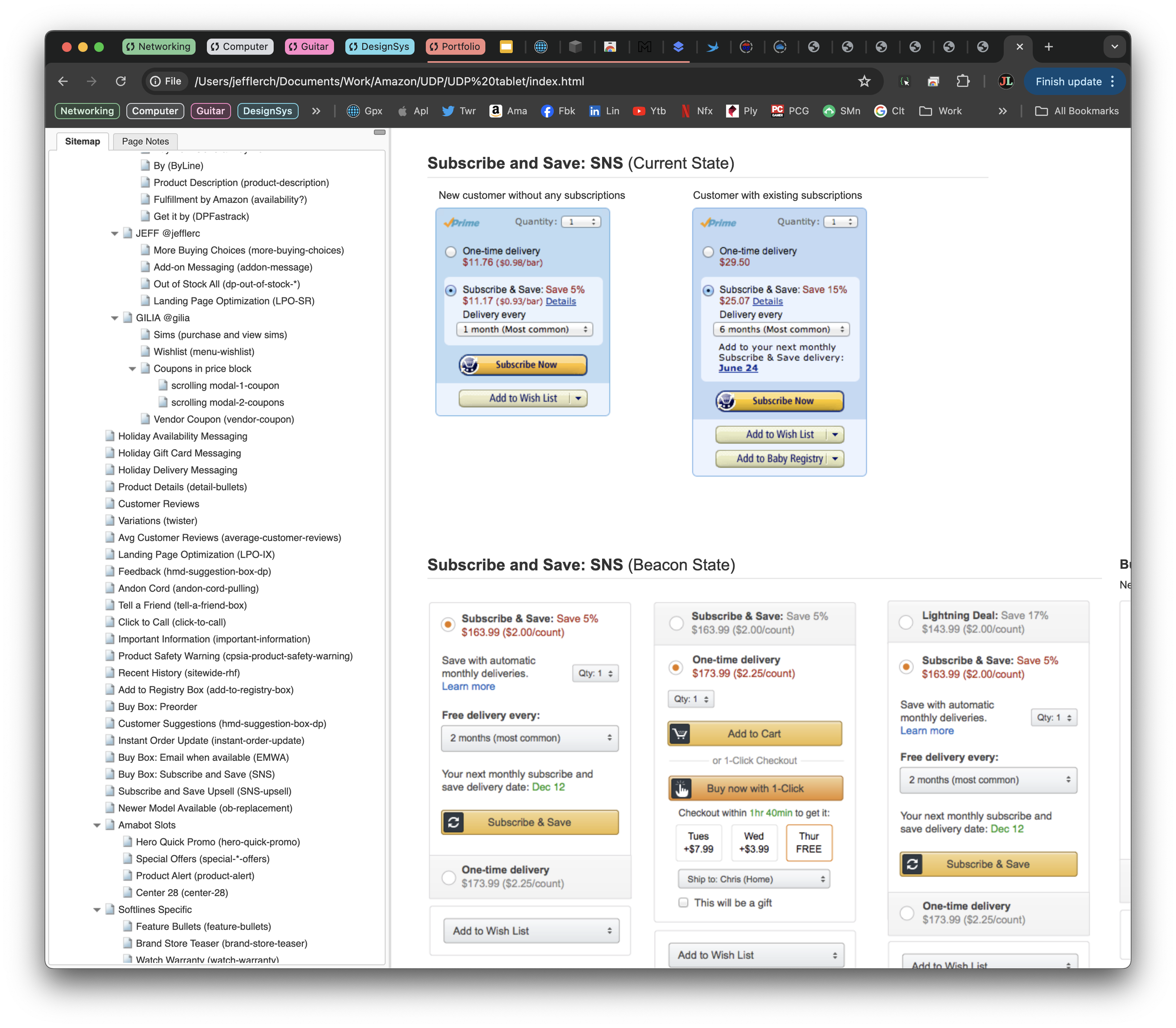

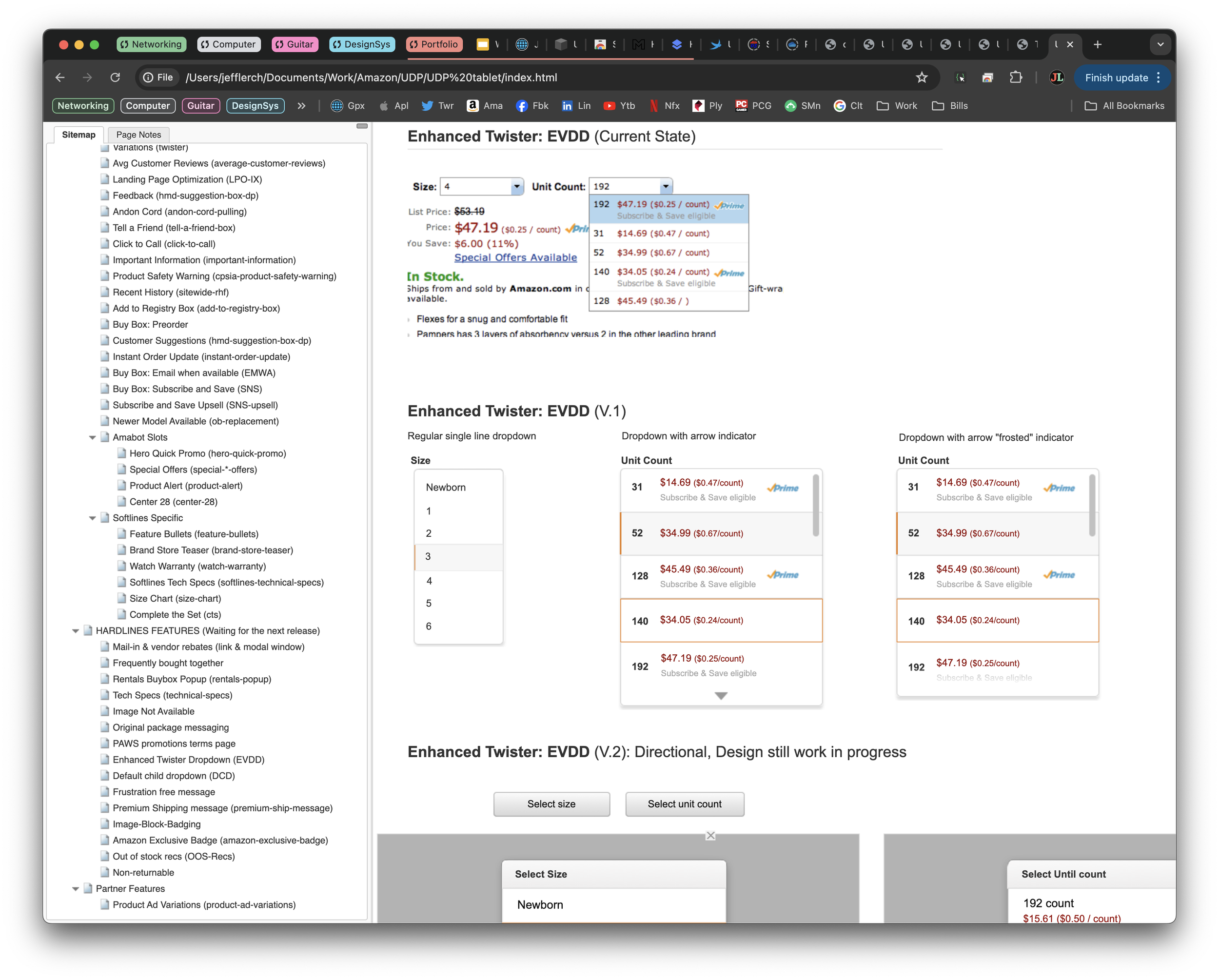

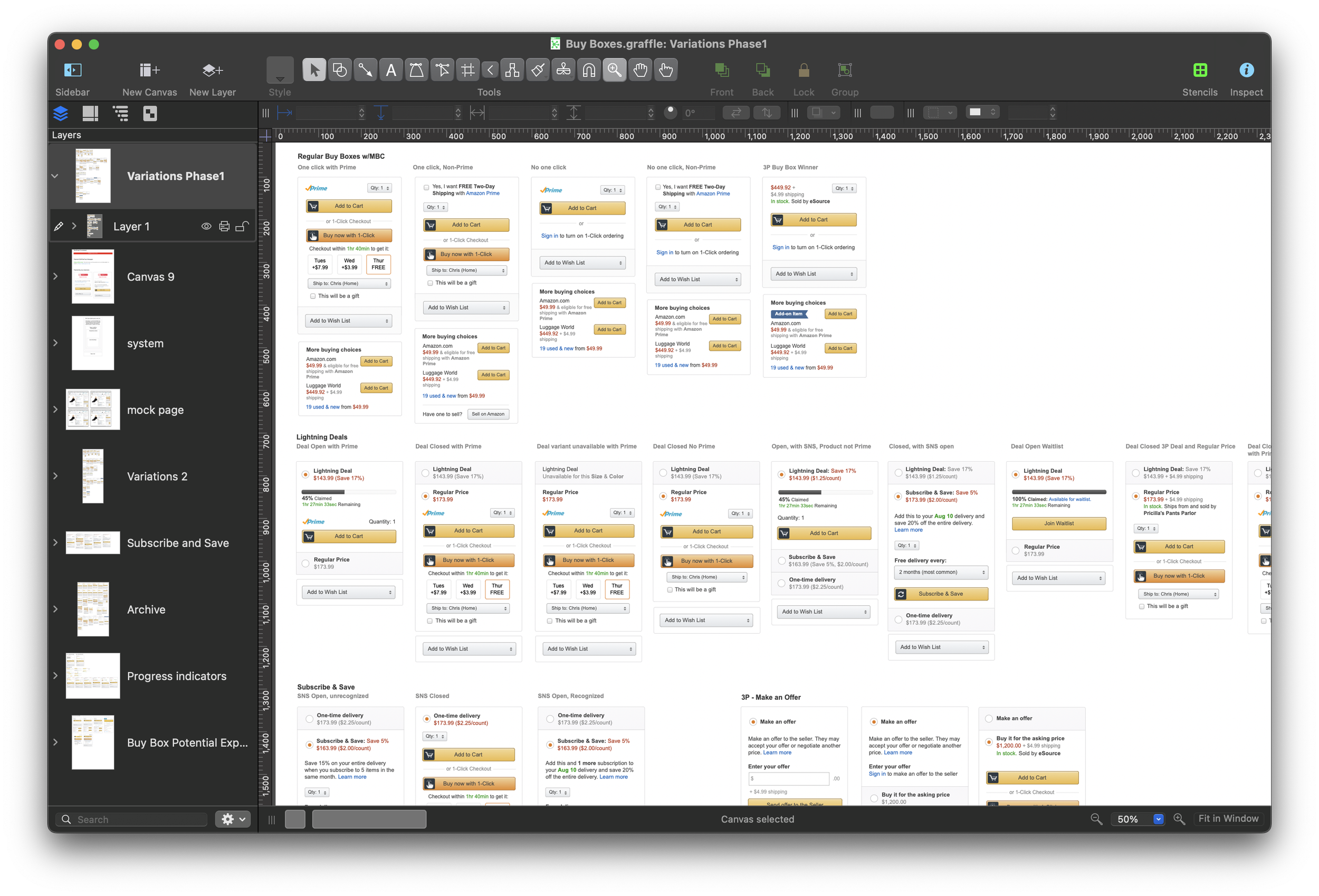

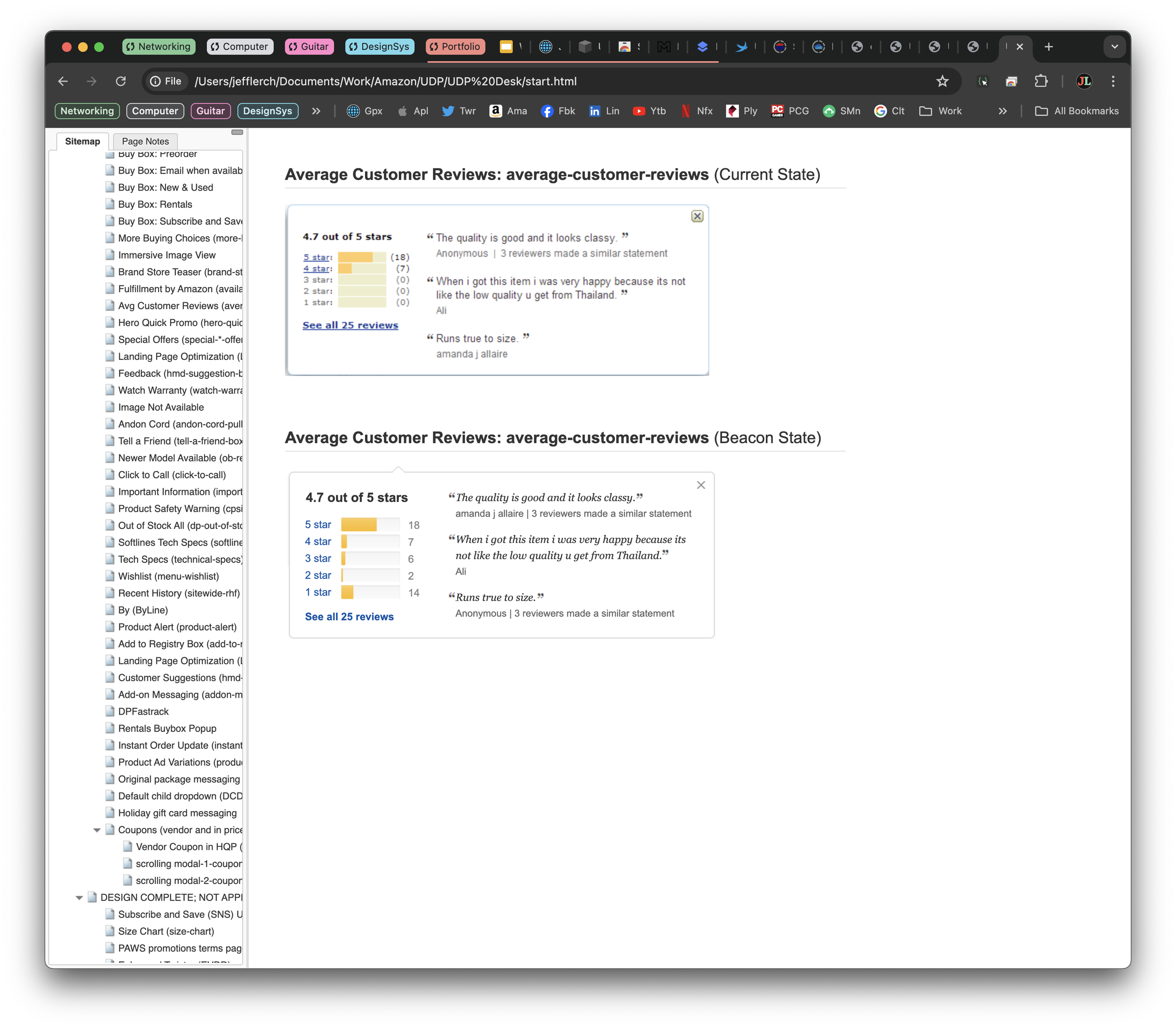

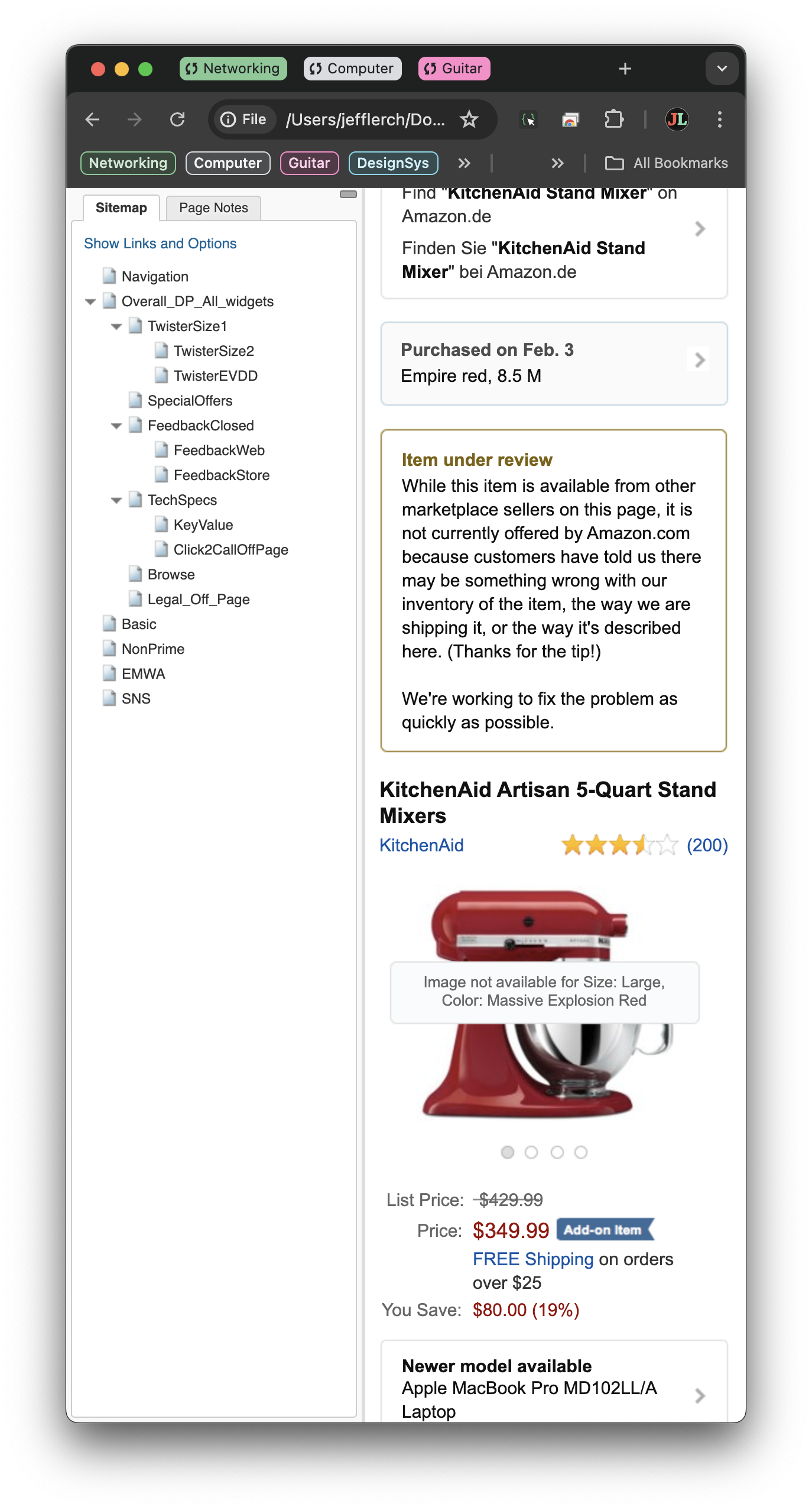

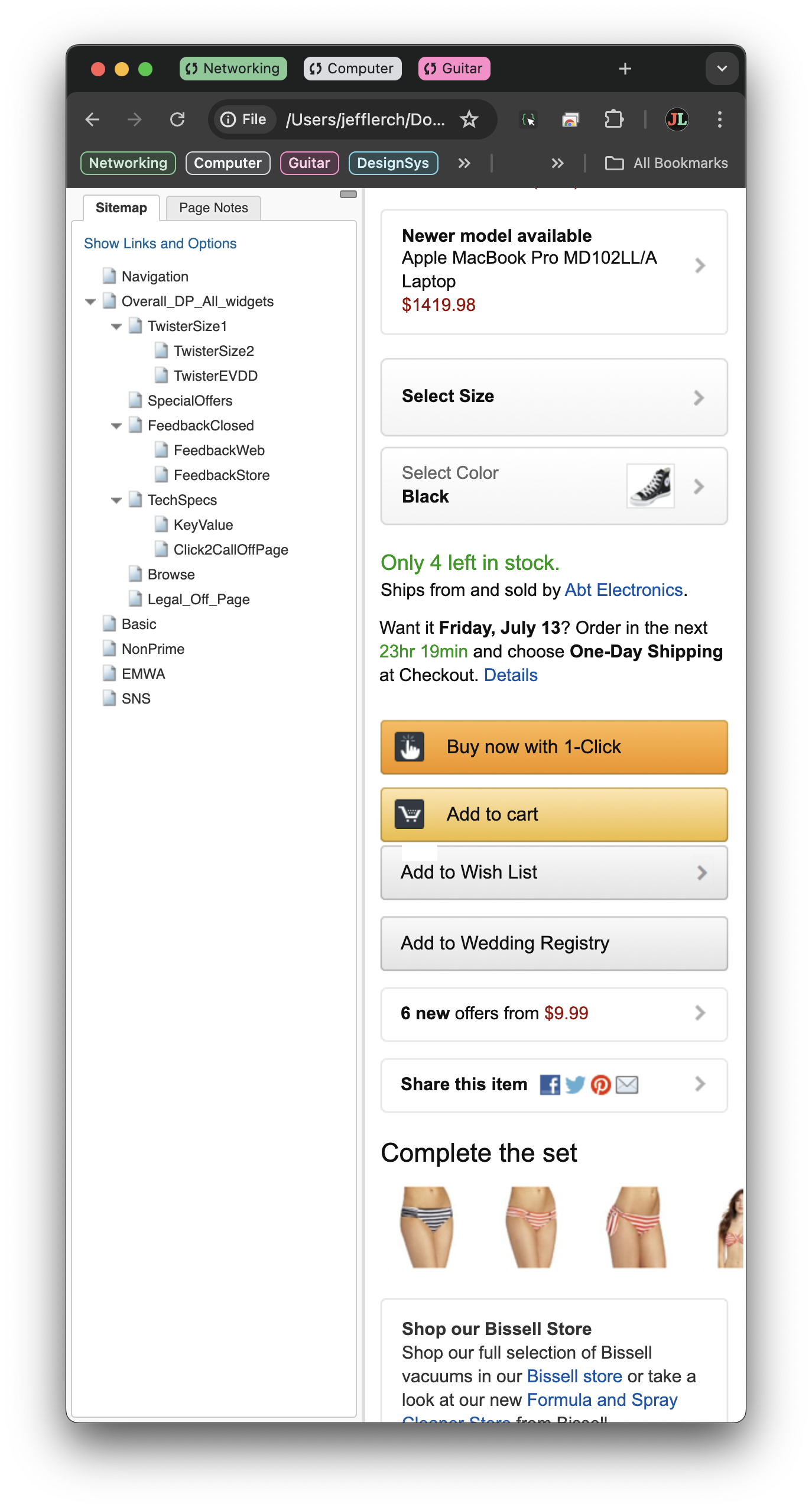

While this process was ongoing, the design team needed a way to track all of these items, ensure the necessary updates were made, and manage any testing or iteration as they moved through the design process. To accommodate this, the team decided to use a centralized Axure RP repository to house all of the incoming features and iterate on them across the organization. At the time, design teams at Amazon were allowed to use essentially whatever tools they felt best met their needs, and very few actually did their design work in Axure (Adobe CS and Sketch were the most popular). Still, Axure was available to everyone through service management and, at the time, was the only product that allowed multiple people to commit changes to a central design file. Most people didn’t design in Axure, but it supported pages, and each page could hold a number of image files that could be modified or added to from within the tool.

Most of the work flowed from those two efforts. The design team set up a page in the Axure file for each known feature. The top of each page contained a template with the technical and non-technical names of the feature, a screenshot of it, its owner, and any business-relevant information about it. My team kicked off the work with our first 200 features, working with the AUI team to refine things on both sides and build a shared set of assumptions about how things should work. Meanwhile, the PMs, TPMs, and I added any new use cases we uncovered to the shared repository and began working with the design owners of those features to get updated versions into the shared Axure file. Over the year, the work largely followed this loop: adding features to the list needing conversion, then resolving them.

Collaboration

If much of what I described above sounds like a typical backlog management process, that’s because it essentially was. The complication was that Amazon was never really set up to work that way. Individual teams typically controlled how and what they ticketed, and only a small portion of teams tracked UI work in their backlogs, which made those backlogs essentially a black box to most designers. Working as a single unit was also new to most designers outside the core team, and they outnumbered us considerably. Progress as a company often felt more like an amoeba seeking out sunlight than a river flowing to the ocean.

Because of this, outreach became a very important part of the project for everyone on the team. As new features were identified, I would reach out to their design owners (not always designers), explain the project and what we needed from them, and then work out a process and schedule for delivering designs and, ultimately, the completed feature by the promised date. Onboarding new teams was a good part of the work, but so was introducing them to the new design system, making sure they understood the paradigms and rules for the space, and making sure I understood their needs as well. This was especially important for smaller or more localized teams, who typically worked far removed from the core organization.

These interactions made up a large part of the project work for me, the designers on my team, and my partners in the business. The effort was considerable, but it was an investment that paid off in several ways. Not only did it keep day-to-day progress on the project moving, it also had a dramatic effect on knowledge sharing across the design organization. Designers learned they had a central point of contact they could reach out to when needed, while I built a growing list of referrals I could use to better serve them. This dynamic served us well long after the project ended, when it came to commitments, considerations, and general awareness across design.

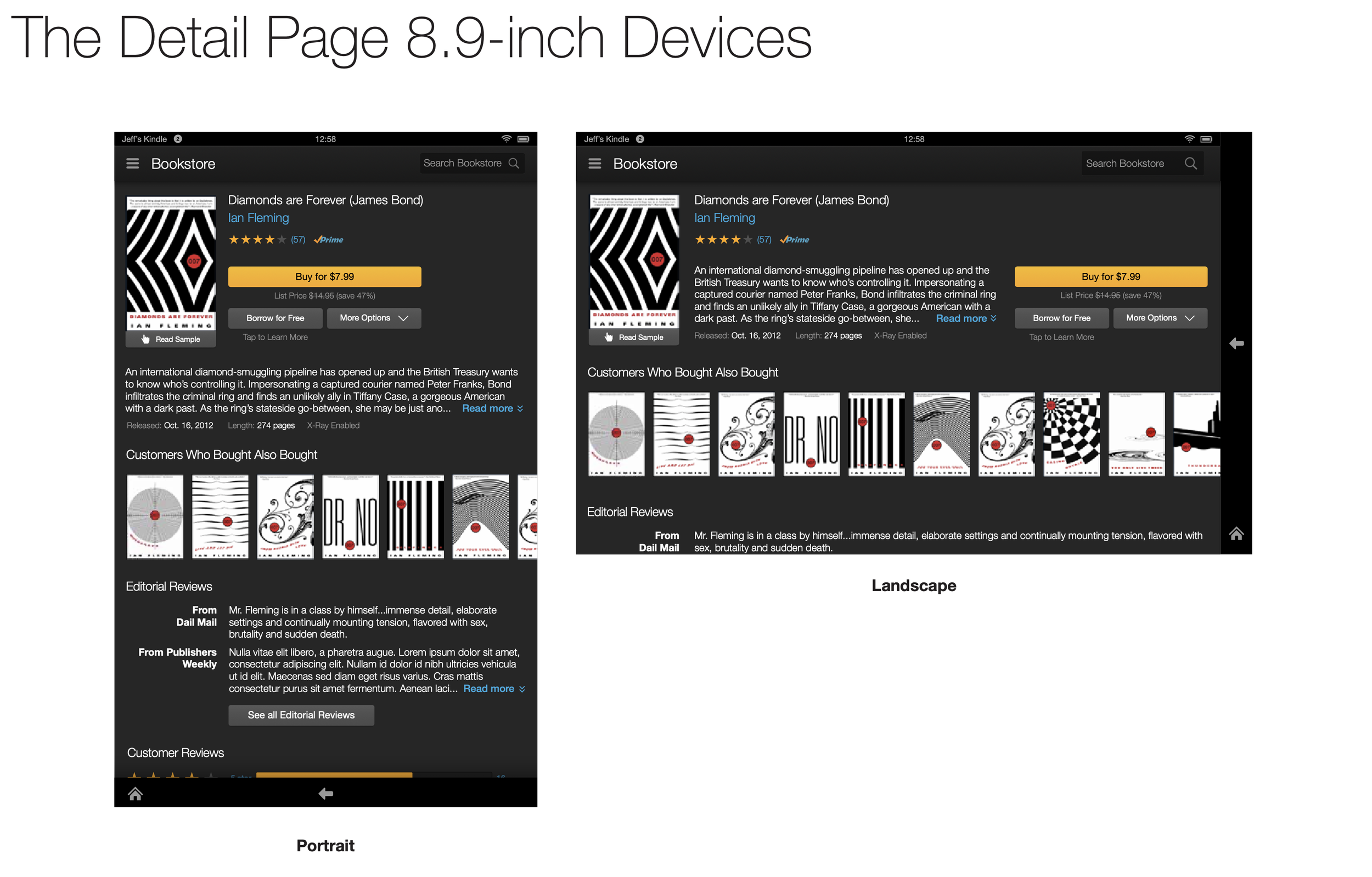

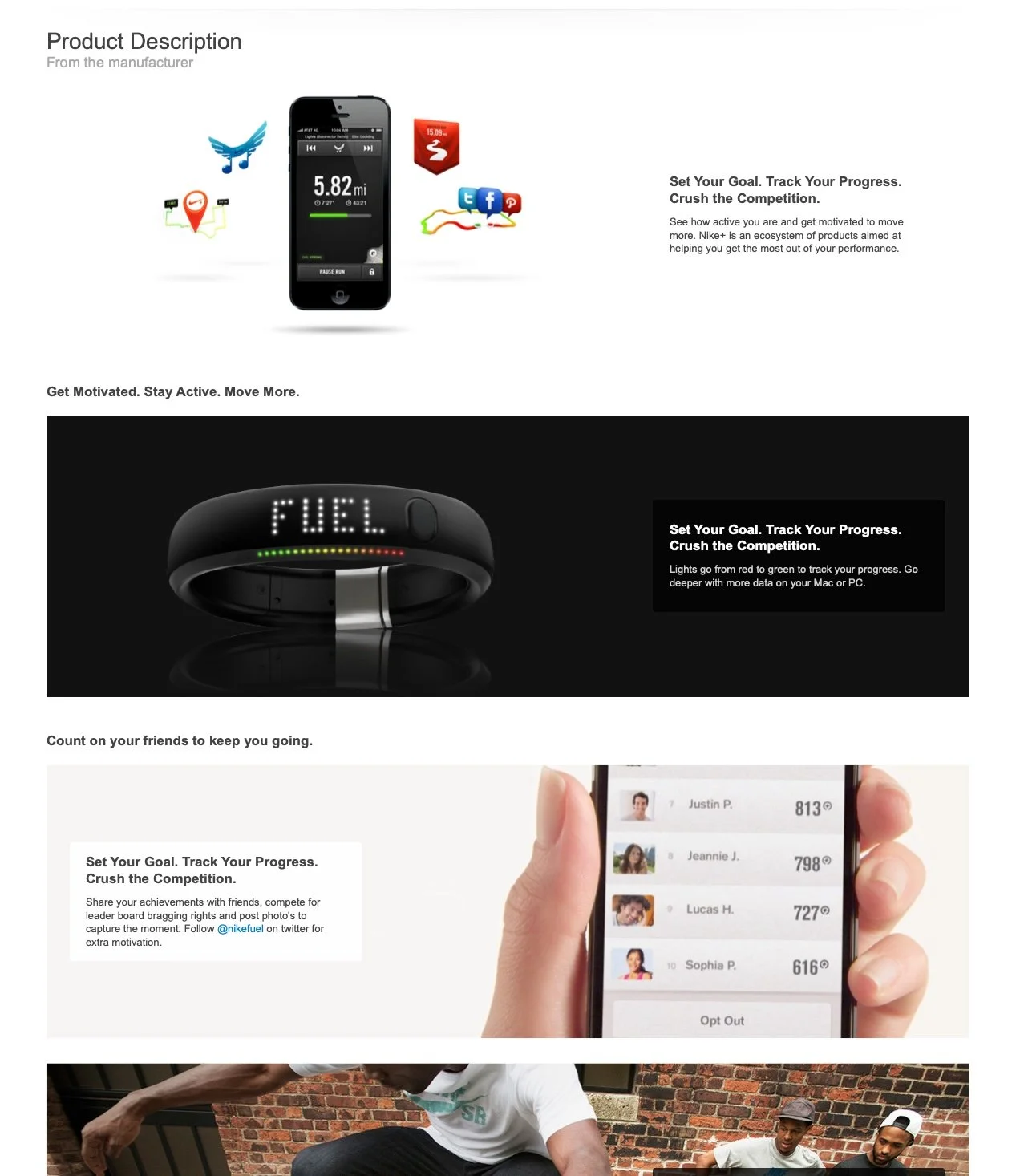

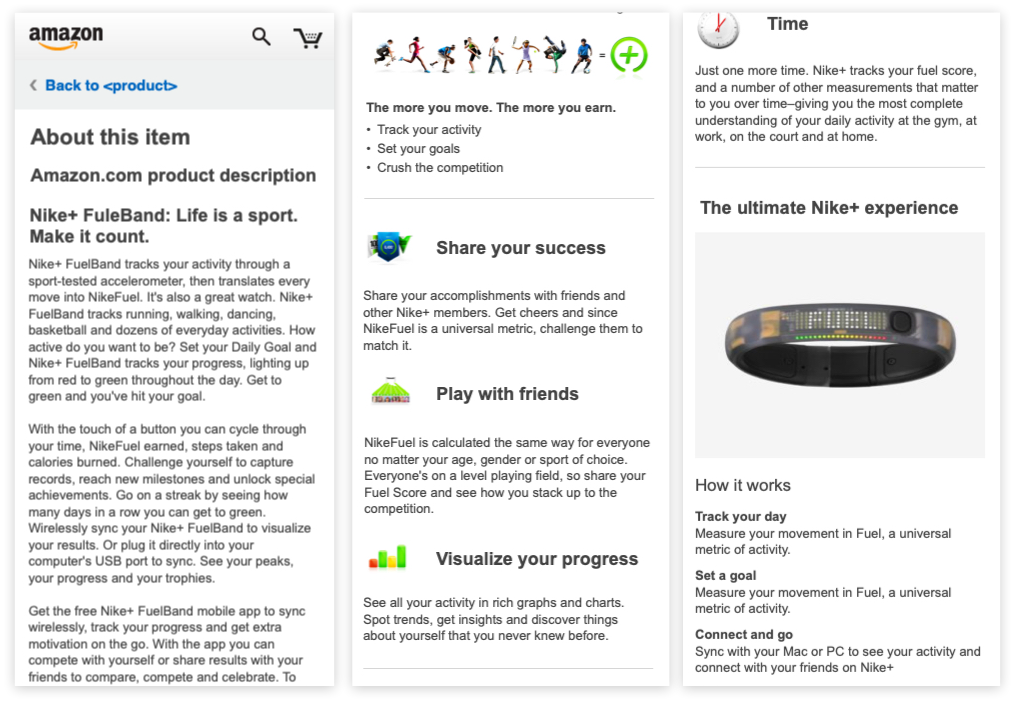

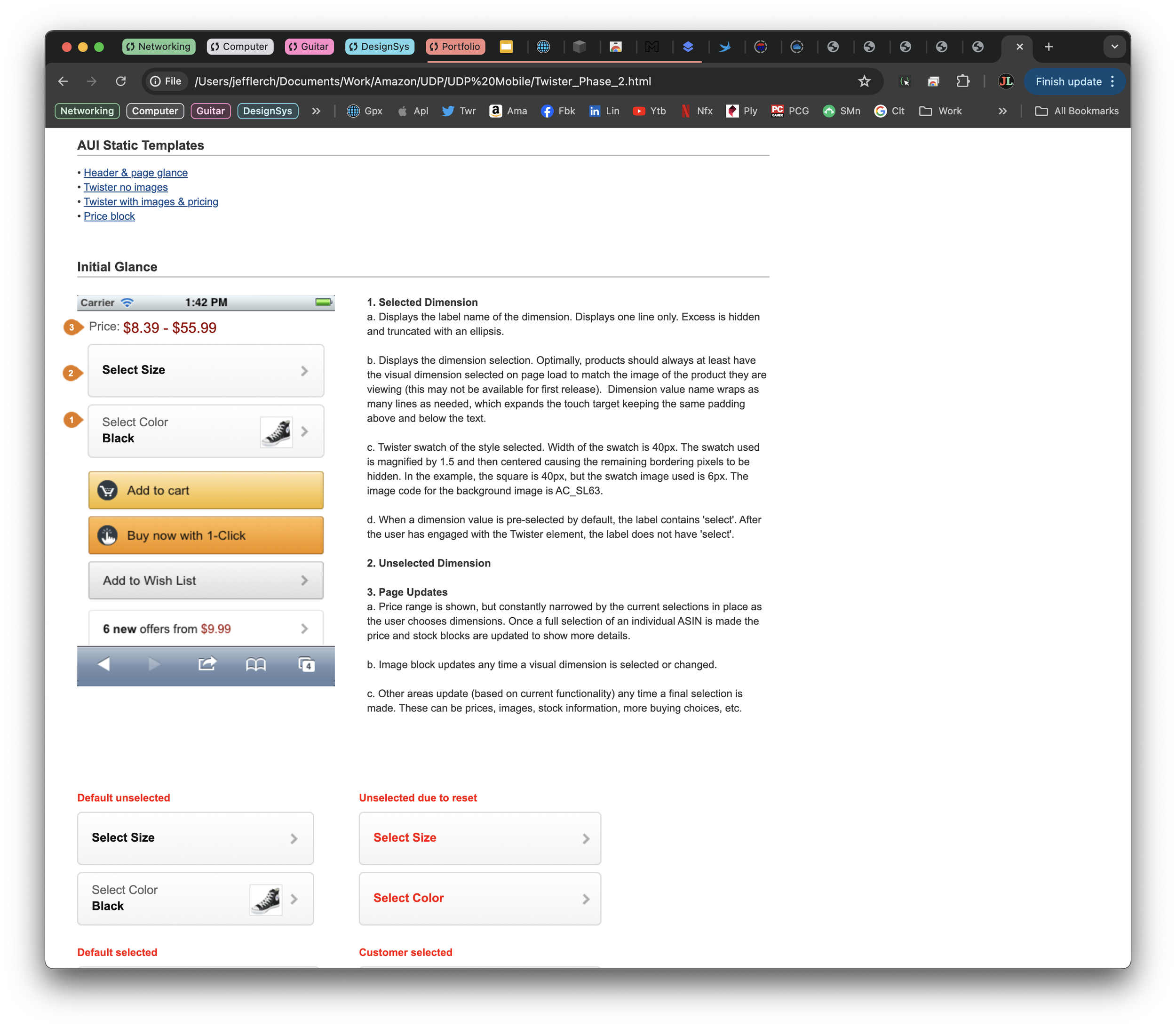

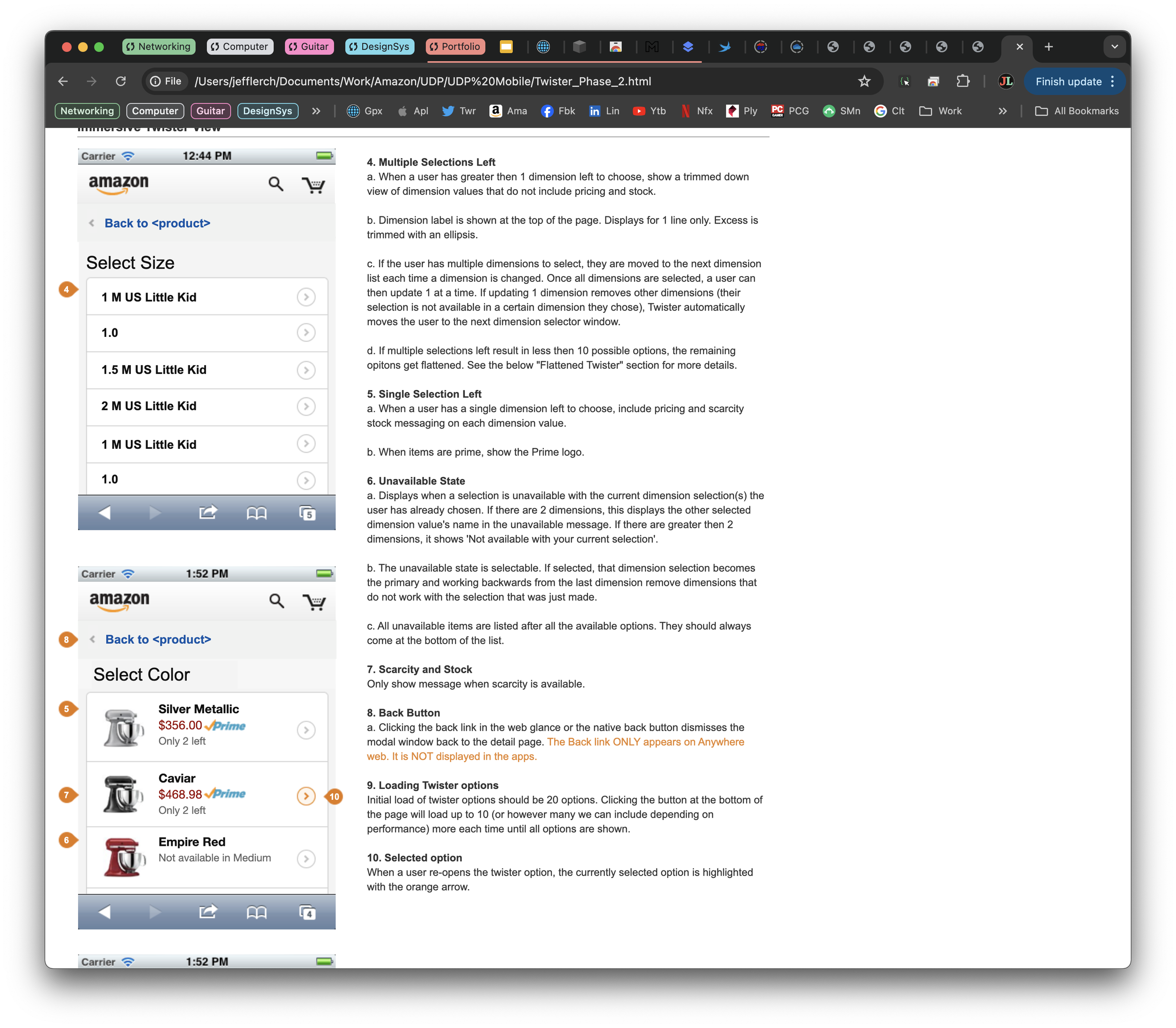

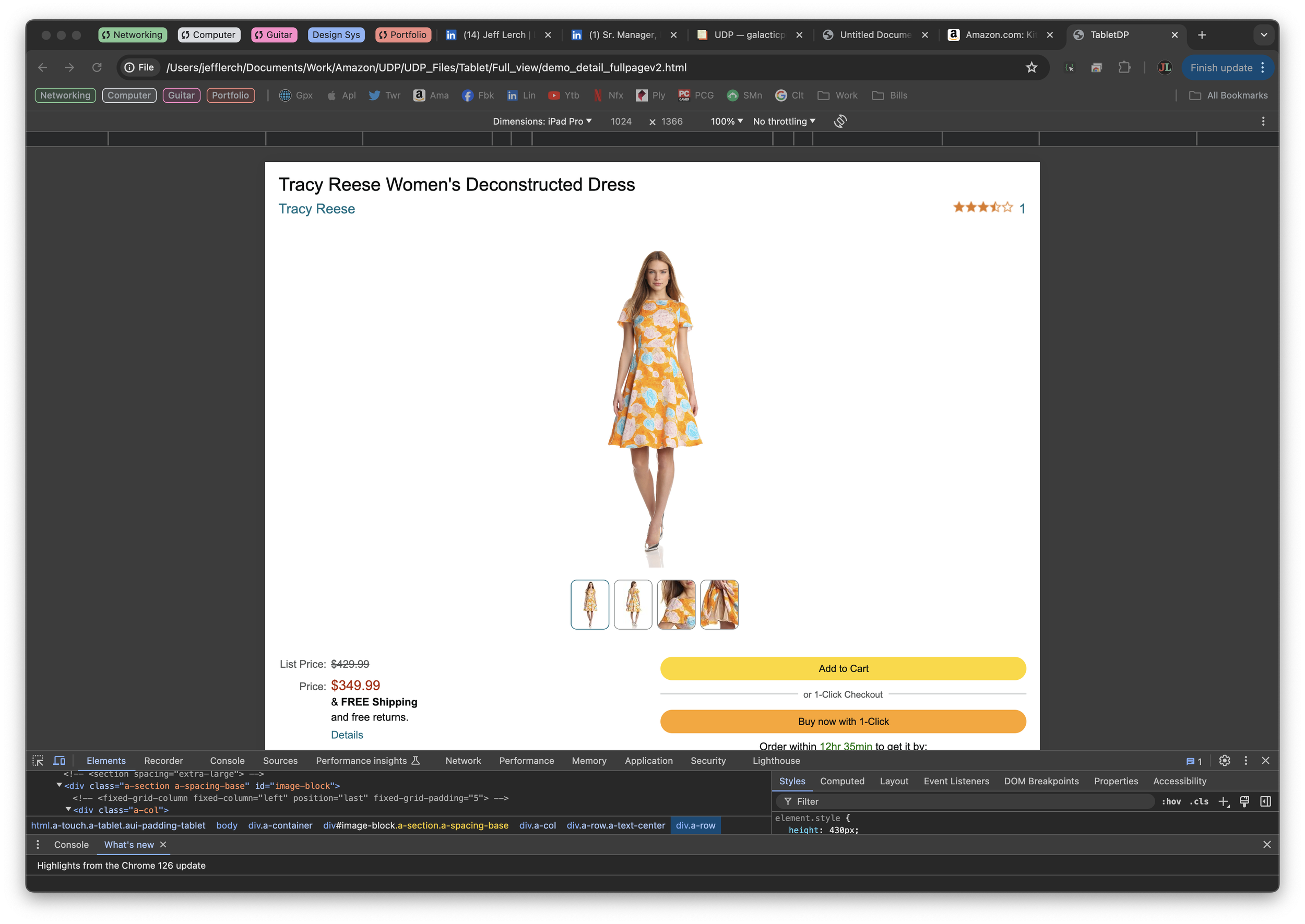

Contexts

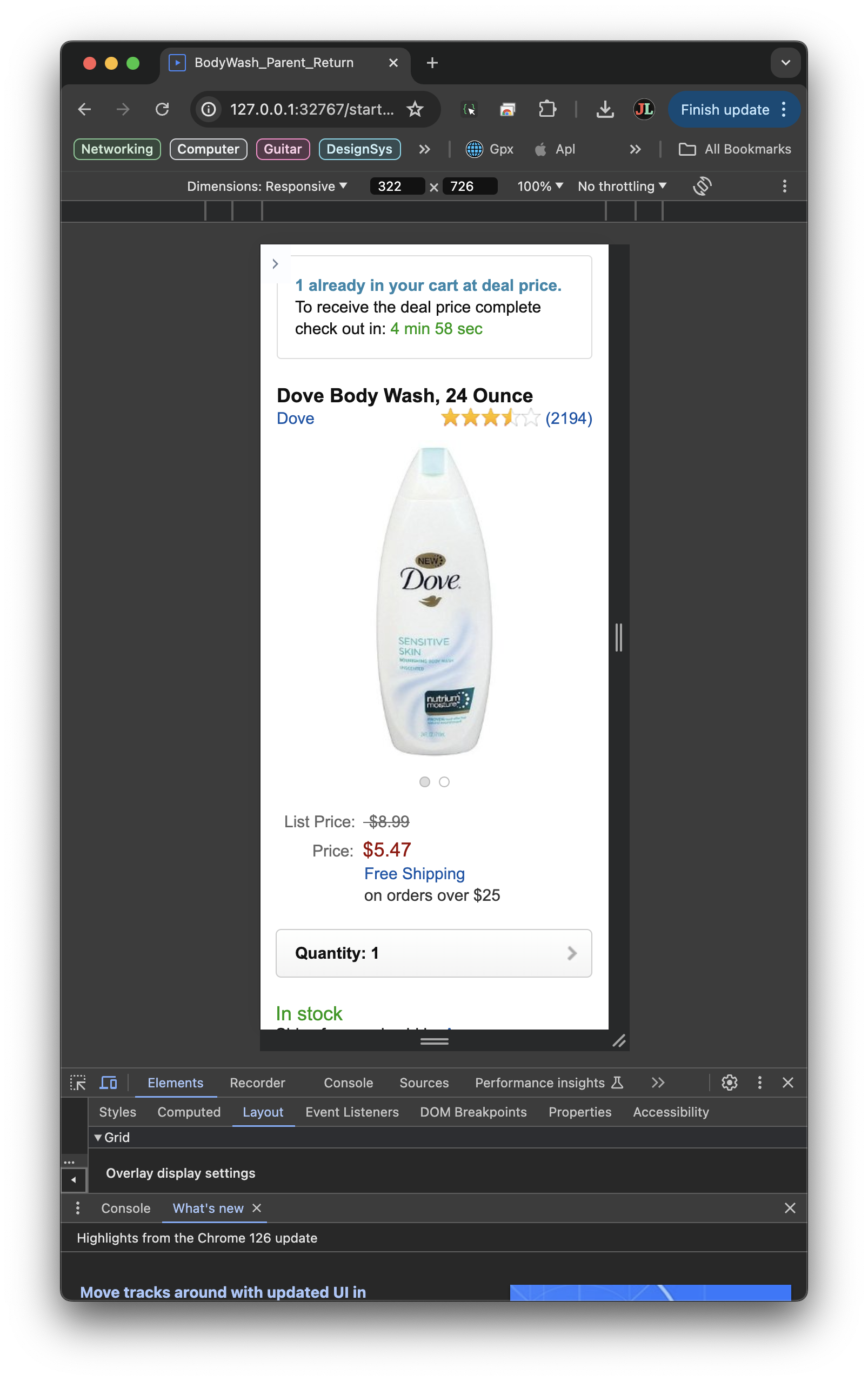

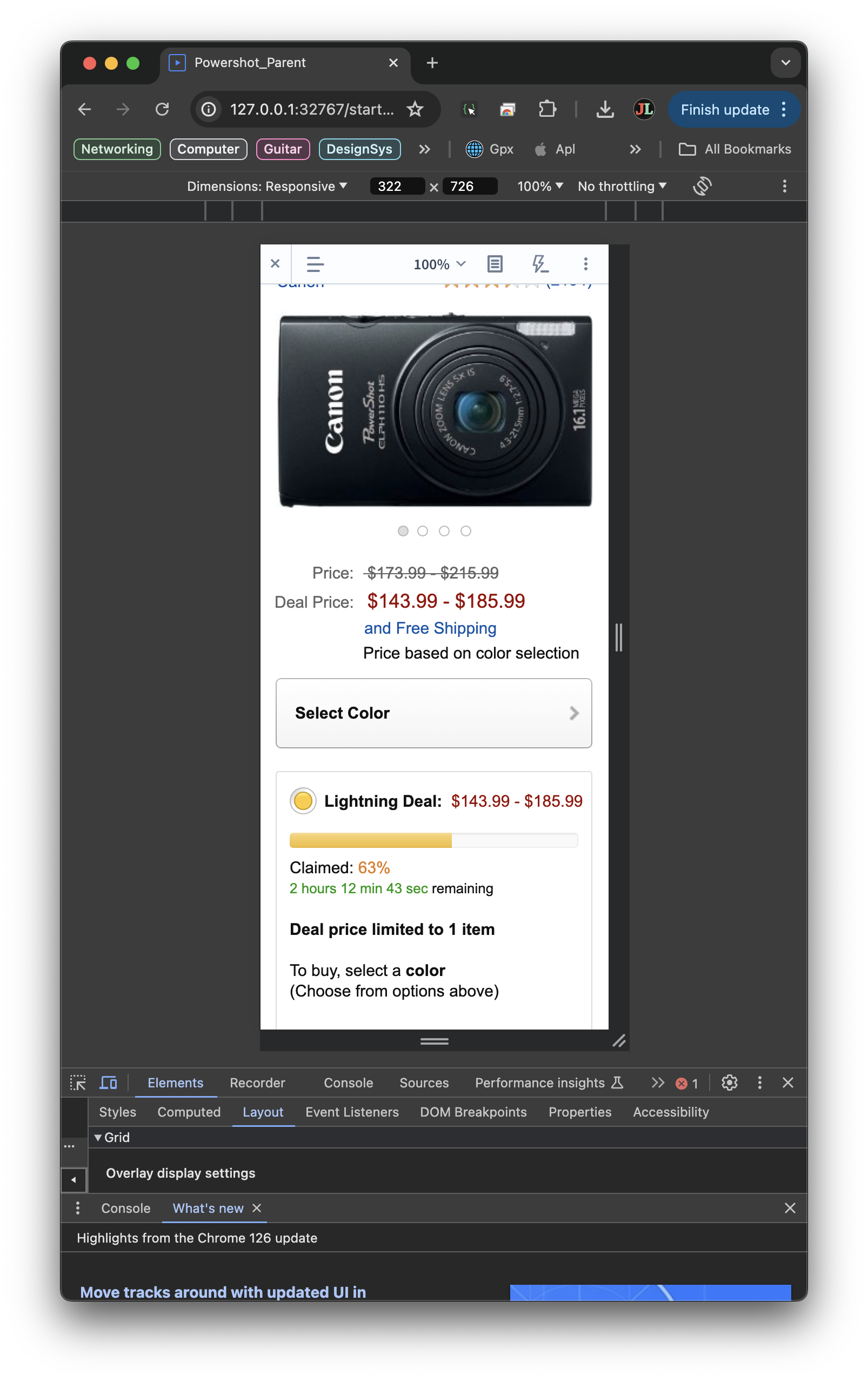

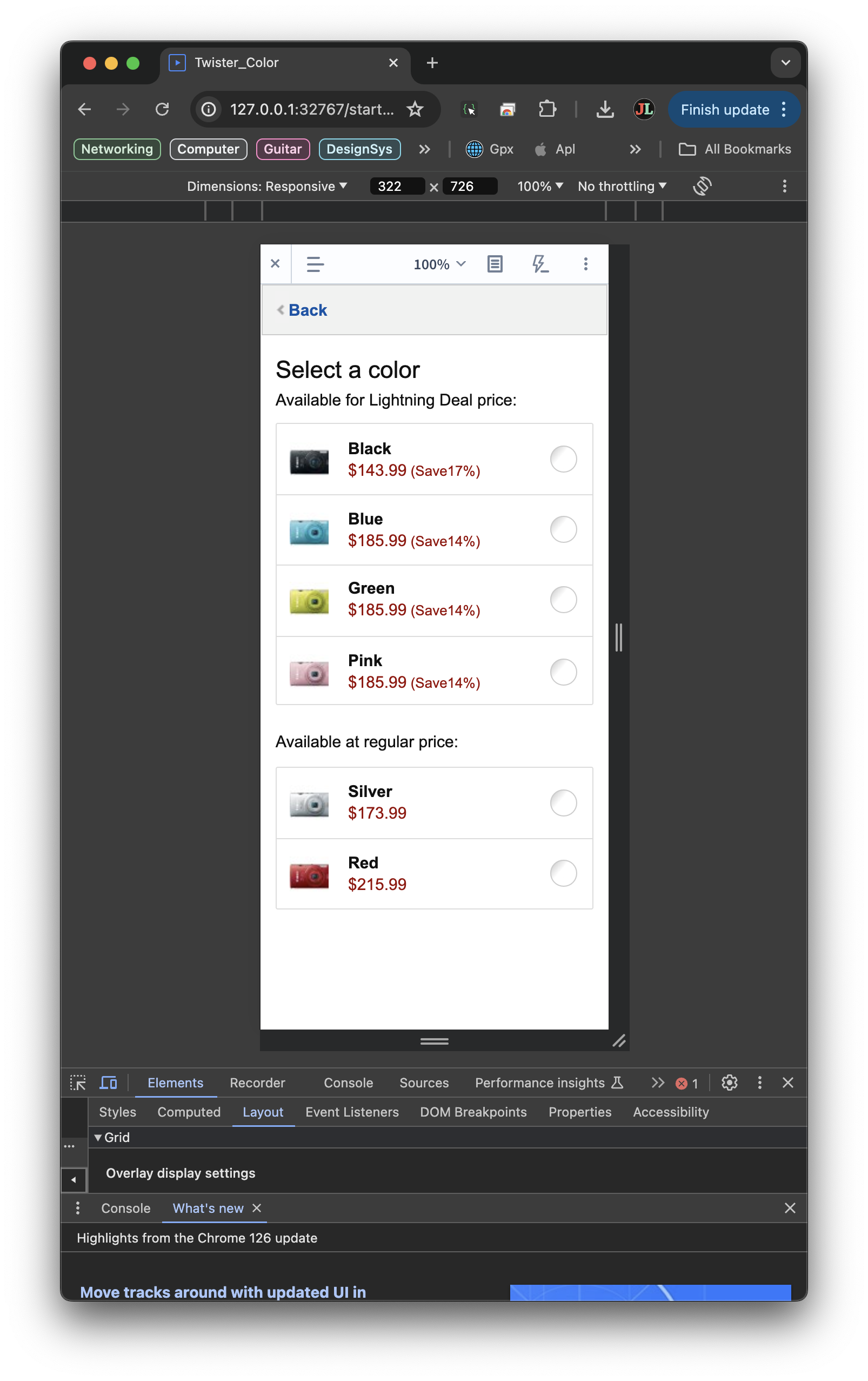

Designing for different contexts was also a key aspect of the team’s feature work. Amazon detail pages exist across a number of different spaces, devices, and contexts, and each one needed to be carried over to the new design system. Furthermore, the team had a secondary goal of ensuring that every detail page, in every device context, was at parity with the main desktop experience. This included not just native mobile and tablet, but specific contexts as well, such as five years’ worth of Kindle devices and the then-unreleased Fire Phone experience. Since the majority of the feature experience existed on desktop, the team started the feature translation there and then moved on to the other devices. The work was split across the team. One of the designers on my team was a former Kindle team member, so she took on most of the Kindle translations. I took on the mobile work, since we were essentially co-creating those patterns for inclusion in the design system. Other, more novel contexts, such as embedded pages, voice, and unique devices, were handled by another member of our team.

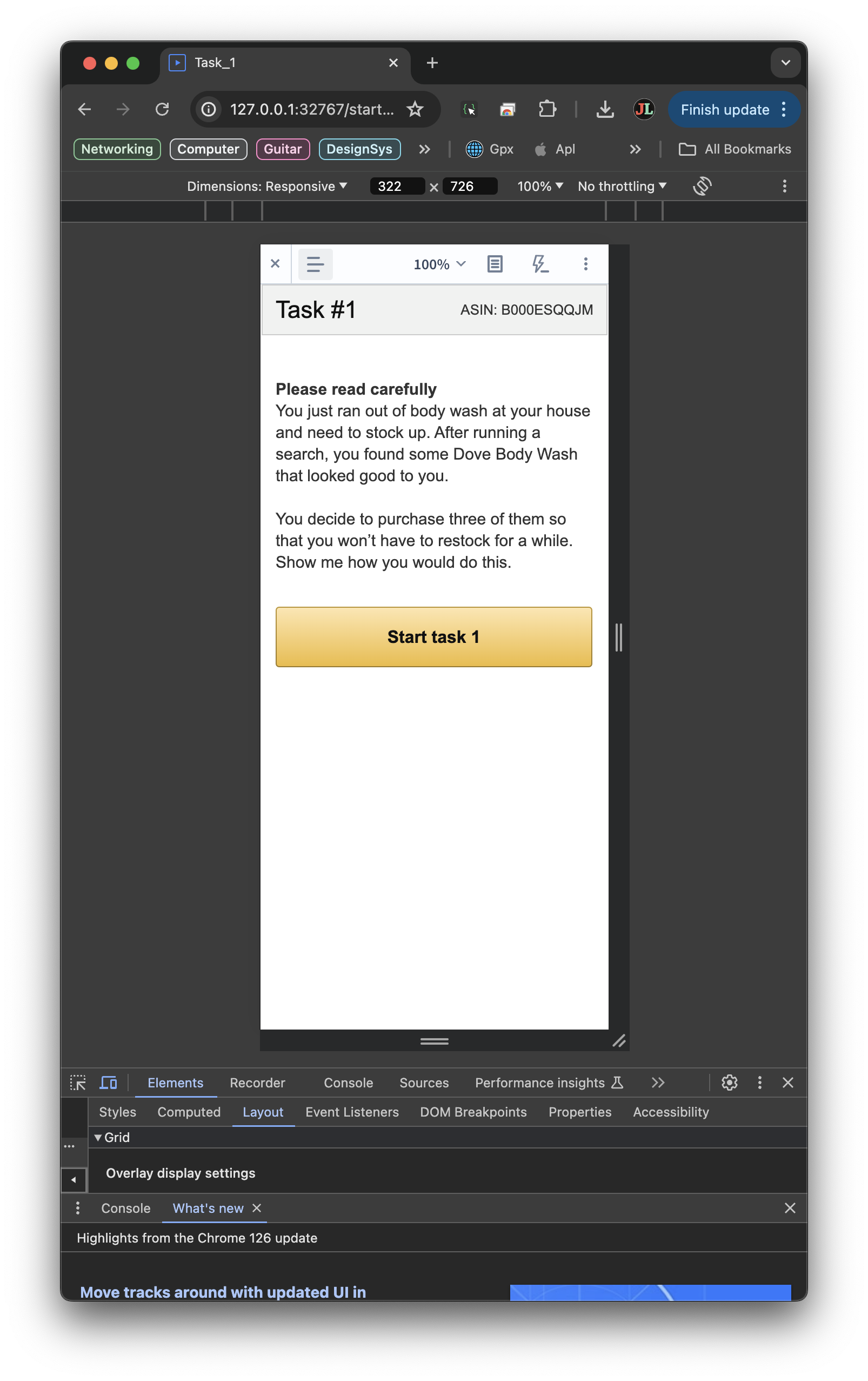

Testing

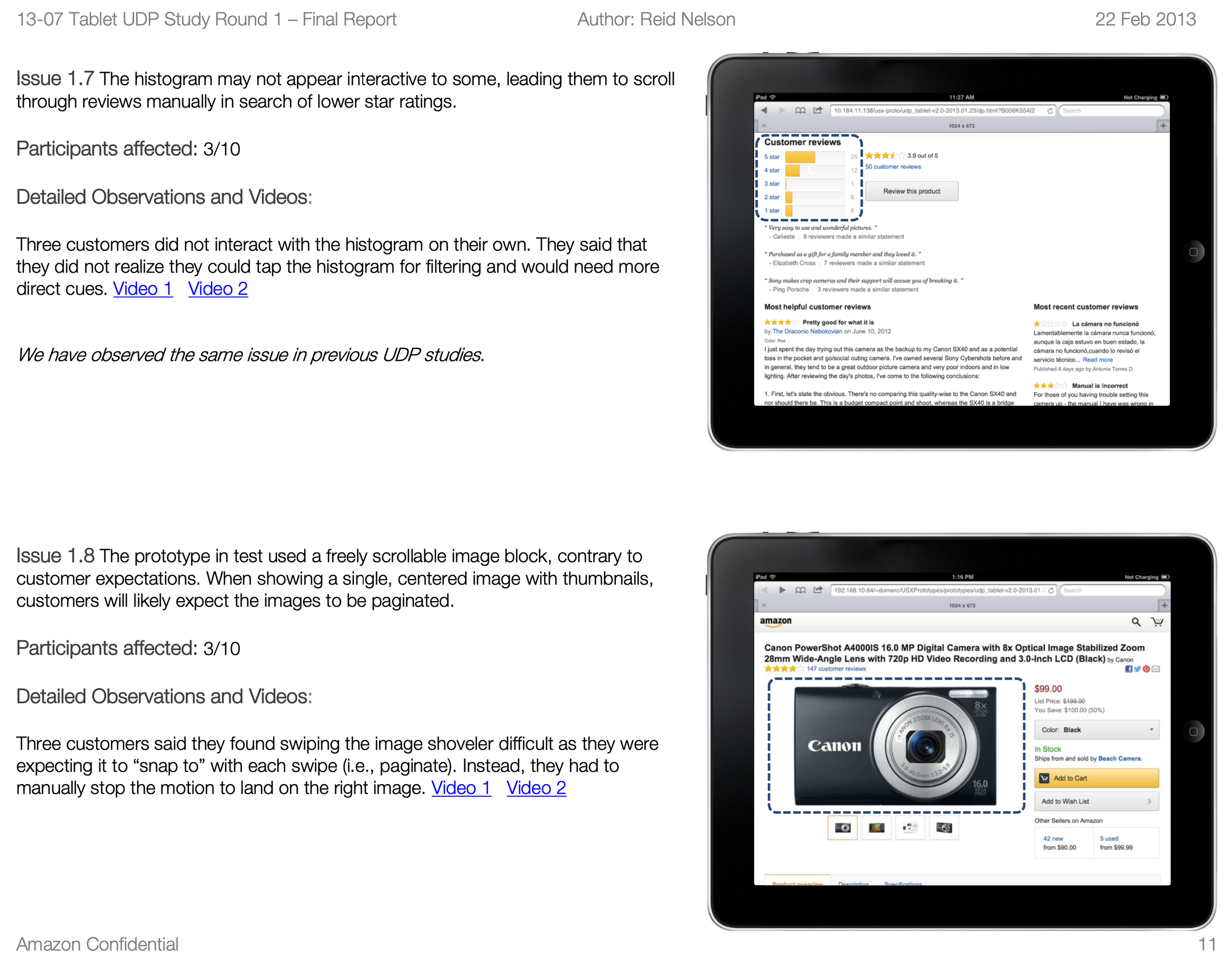

The team used both evaluative and generative research methods to make sure the work was headed down the right path. We held weekly RITE (Rapid Iterative Testing and Evaluation) sessions that were open to our team and our partners on the UDP project. We generally tested compilations of features from various areas of the product experience, using a master template we developed to track all of the features slotted into the page. RITE sessions also let us iterate on the issues we uncovered more collaboratively, since attendees usually included designers and PMs from whichever areas of the product space were being tested that day.

As with most retail enhancements at Amazon, any new feature moving through the deployment process underwent multiple rounds of multivariate testing, mostly to determine its overall impact on ordered revenue. The team had a mandate to meet or exceed the control before anything could be fully deployed (including deprecations), so those numbers were closely tracked for each feature we completed. This process helped refine individual features, but it also led to broader changes, both to our templates and to the design system itself. More traditional usability tests often took place after launch as well, largely to better understand A/B results coming out of specific markets. Desktop tests were run in continental Europe and the UK, and mobile testing was done on site in Japan and India.

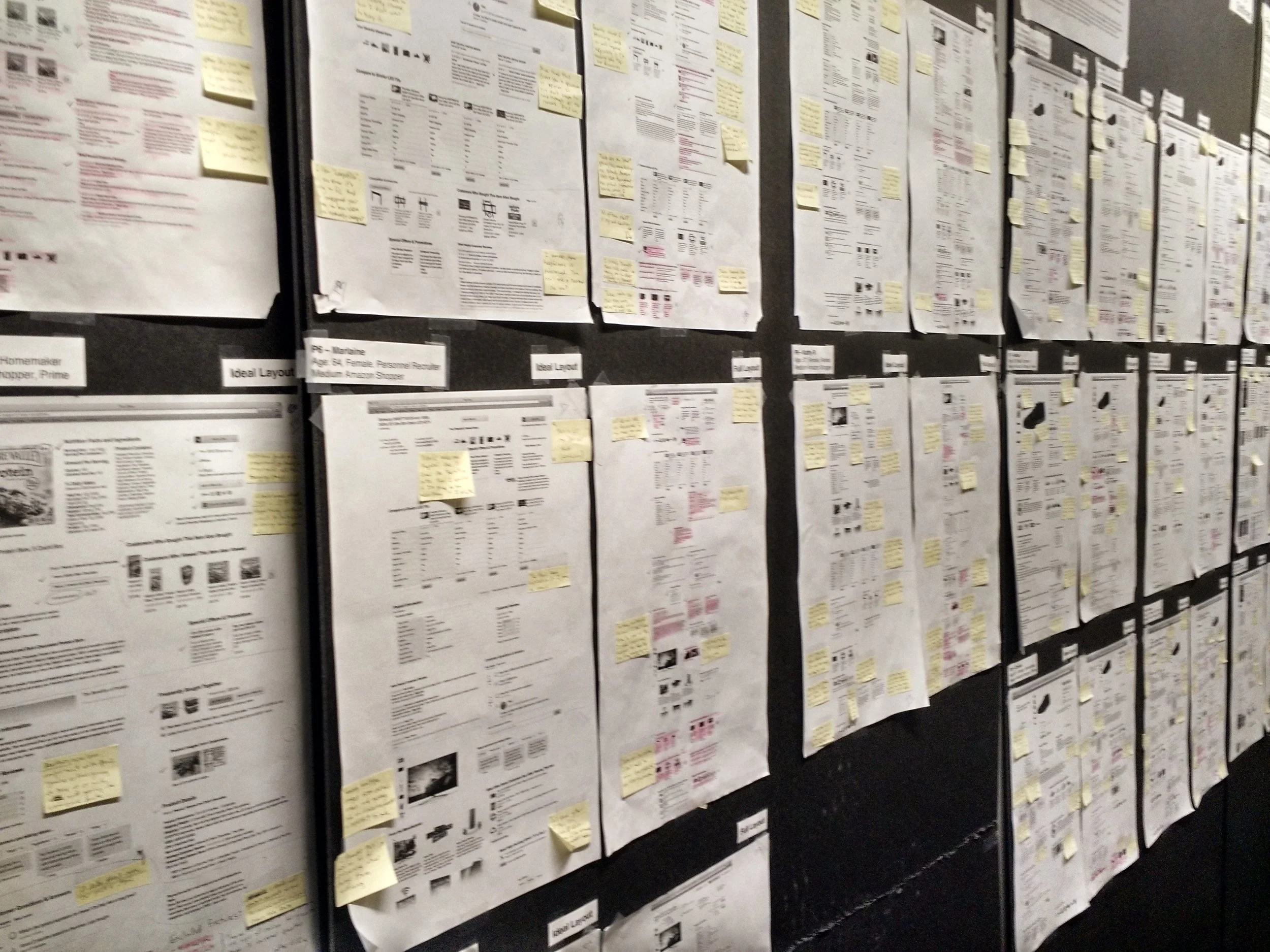

Architecture

In addition to evaluative testing, the team ran a number of generative studies to inform the architecture of our various detail page templates. The first round was a participatory design activity in which customers were asked to arrange a quasi-randomly selected group of features into their “ideal” detail page layout. We then interviewed them about their choices to understand their rationale. This was followed by a small card-sorting study and, once the team had developed a core set of templates from those earlier rounds, a series of eye-tracking studies.

Outcome

By the end of the year, my team had led the conversion and consolidation of every feature we had earmarked as necessary for successfully converting detail pages across Amazon. The team also cut the number of templates in use across the site in half, contributed to the creation or enhancement of ~20 Amazon UI components, and handled the general component translation for both mobile and tablet-style devices. The conversion also vastly exceeded our targets for both latency reduction and incremental revenue, the latter coming in well over our parity target and millions over the team’s original stretch goal.

Another outcome was a much more collaborative culture among the design and engineering teams working across detail page experiences. Knowledge sharing, collaboration, and coordination were far more common thanks to the relationships we’d built over the year of the project. We also walked away with a much deeper understanding of our partners, both through collaboration and through the body of research we’d developed with those teams. Those learnings, along with the technical and cultural foundation we had already established, primed the team to think further ahead, with confidence, in the next project we took on after UDP: The Stage.